Why India is seeing a rise in oral cancer cases

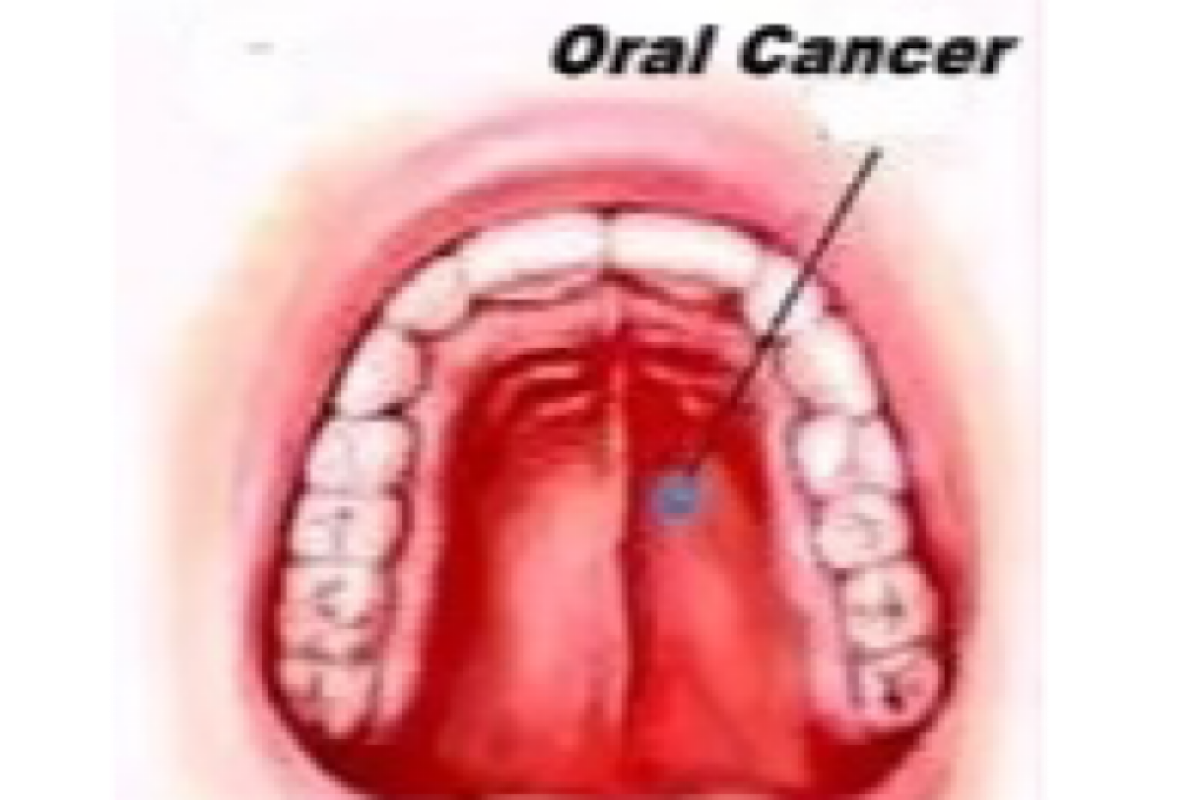

India bears a significant burden of oral cancer and accounts for about 30 per cent of all global cases, doctors said on Tuesday.